Introduction

Hello World! It has been a few months since I’ve posted anything here, due to taking on a new role at work and having to learn new skills for it.

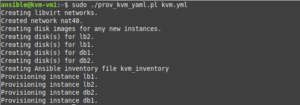

In a previous post, I described writing a Perl provisioning script for creating KVM virtual machines. The machines and their attributes are defined in a YAML file, and then “spun up” using virt-install and cloud-init. It performs a similar role to Terraform, but is simpler and more focused. I wrote it mainly to improve my Perl skills and learn more about libvirt, not to replace Terraform entirely.

However, I later realized that the script's usefulness is limited because it can’t create libvirt NAT networks or provision virtual machines with static IP addresses. My first version used Dnsmasq to assign addresses to the VMs; I’ve since soured on this approach. Instead, I’ve refactored the script to use cloud-init to assign a static IP address to each VM. This post covers that rewrite.

KVM host setup

For this exercise I started with a fresh install of Debian 13/trixie. Other distributions that can run libvirt/KVM, such as Enterprise Linux or Ubuntu, will work as well. After installing the OS, I installed KVM using the Ansible playbook below. I won’t discuss how to configure bridged networking, as I covered that in my previous post on KVM; this post focuses on libvirt internal networking. However, the script works with bridged networking as well.

---
- hosts: kvm
  tasks:
    - name: Check if virtualization extensions are enabled
      ansible.builtin.shell: "grep -q -E 'svm|vmx' /proc/cpuinfo"
      register: grep_result
      # grep exits non-zero when nothing matches; let the next task report it.
      failed_when: false
      changed_when: false

    - name: Fail if virtualization extensions are disabled
      ansible.builtin.fail:
        msg: 'Virtualization extensions are disabled'
      when: grep_result.rc > 0

    - name: Install required packages
      ansible.builtin.apt:
        name:
          - bridge-utils
          - libguestfs-tools
          - libosinfo-bin
          - libtemplate-perl
          - libvirt-daemon
          - libvirt-daemon-system
          - libyaml-libyaml-perl
          - qemu-kvm
          - virtinst
        state: present
        install_recommends: false

You can also install these packages with apt: sudo apt install --no-install-recommends bridge-utils libguestfs-tools libosinfo-bin libtemplate-perl libvirt-daemon libvirt-daemon-system libyaml-libyaml-perl qemu-kvm virtinst.

The revised provisioning script

The script is still written in Perl and uses the Template Toolkit (the Template module) and YAML::XS. The Debian package names for these are libtemplate-perl and libyaml-libyaml-perl. In this version of the script, I’ve removed the ability to create DHCP reservations with Dnsmasq. My original reason for choosing that approach was to be able to change a VM’s IP address without having to modify the network configuration inside the VM. However, managing lease files became a hassle, and there is no documented method for deleting leases from libvirt networks. Network configuration is now performed with cloud-init, and a VM is assigned a static IP address if one is defined in the YAML file. Dnsmasq can still be configured for bridged networks, but it only provides DNS records for the hosts, not DHCP reservations. Unlike in the previous version, Dnsmasq configuration is disabled by default. Below is a list of the files required to run the script:

- A project.yml or project.yaml file. This is the file that contains the YAML parameters for the script. You can call it whatever you want, as long as it has a .yml or .yaml extension. I named mine db.yaml. The project name will be taken from the filename without the extension.

- ansible_inventory.tt. This is a Template Toolkit template used to generate an Ansible inventory file from the YAML. You won’t need it unless ansible_inventory is set to 1 in the YAML file. The generated file will be named <project>_inventory.

- dnsmasq_hosts.tt. This is a Template Toolkit template used to generate a dnsmasq configuration file with DNS records for the hosts. The generated file will be written to /etc/dnsmasq.d/99_<project>.conf. You won’t need it unless dnsmasq is set to 1 in the YAML file.

- network-config.tt. This is a Template Toolkit template used to create a cloud-init network configuration file that assigns a static IP to the VM during provisioning. You won’t need it if all of the VMs are using DHCP.

- libvirt_net.tt. This is the Template Toolkit template that add_libvirt_net in ProvVMs.pm uses to generate the libvirt network XML. You won’t need it unless the YAML file contains a networks hash.

- user-data file for cloud-init.

- ProvVMs.pm. This contains the subroutines for the script.

- prov_kvm_yaml.pl: the script itself.
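
As a rough illustration of how the project name drives the generated file names, here is a minimal Perl sketch. The regex mirrors the one used in prov_kvm_yaml.pl; the helper name project_files is hypothetical and is not part of the script.

```perl
use strict;
use warnings;

# Hypothetical helper: derive the project name and the generated file names
# from the YAML file name, using the same regex as prov_kvm_yaml.pl.
sub project_files {
    my ($yaml_file) = @_;
    my ($proj_name) = $yaml_file =~ /^(\S+)\.(?:yaml|yml)$/
        or die "File must be a .yml or .yaml file.\n";
    return {
        project   => $proj_name,
        inventory => "${proj_name}_inventory",
        dnsmasq   => "/etc/dnsmasq.d/99_${proj_name}.conf",
    };
}

my $files = project_files('db.yaml');
print "$_: $files->{$_}\n" for sort keys %{$files};
```

Because the regex captures everything before the extension, a path such as projects/db.yaml would give a project name of projects/db, so it is simplest to run the script from the directory that holds the YAML file.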

The three template files are shown below, in order: ansible_inventory.tt, dnsmasq_hosts.tt, and network-config.tt. They receive their variables from ProvVMs.pm:

[% FOREACH host IN ansible_hosts -%]
[% host.key %][% IF host.value.ip != '' %] ansible_host=[% host.value.ip %][% END %]
[% END -%]
[% FOREACH group IN ansible_groups -%]
[% "[" %][% group.key %][% "]" %]
[% FOREACH member IN group.value.sort -%]
[% member %]
[% END -%]
[% END -%]

# DNS records
[% FOREACH host IN hosts.pairs -%]
address=/[% host.key %]/[% host.value %]
[% END -%]

version: 1
config:
  - type: physical
    name: [% nic_id %]
    subnets:
      - type: static
        address: '[% ip %]'
        netmask: '255.255.255.0'
        gateway: '[% gateway %]'
  - type: nameserver
    address:
      - '[% dns %]'
    search:
      - '[% domain %]'

Next is a summary of the .yaml/.yml file. The file consists of optional single-value global parameters, an instances hash that defines the virtual machines and their parameters, and, in the new version, an optional networks hash containing libvirt networks. First, the list of global parameters you can set:

- ansible_inventory. Set to 1 to generate an Ansible inventory file (<project>_inventory).

- default_network: the default libvirt network to use. Note: the bridge value from default_network overrides the value of default_bridge.

- default_bridge: the default network bridge to use. Default is br0 if neither this nor default_network is set.

- default_disk_size (in GB). If not specified, defaults to 10.

- default_image: full path to the default cloud image to use. There is no default set for this and if you choose not to set it, you will need to set the image: <image> parameter for each instance. Therefore, setting this parameter is recommended.

- default_os_type: the OS type to use for virt-install, such as debian13. The default value is linux2020. The full list of OS types can be retrieved with the command osinfo-query os. The value is also validated against this list.

- default_ram (in MB). If not specified, defaults to 1024.

- default_user_data_file: specify the path to an alternative default user-data file for cloud-init. By default it uses the file user-data in the same directory as the script.

- default_vcpu: default number of virtual CPUs. Default is 1.

- disk_path: the default directory where disk images are stored for all instances. Default is /var/lib/libvirt/images.

- dnsmasq. Default is 0. Set to 1 to enable dnsmasq.

- domain: the default domain for all instances. Default is localdomain. This is only used for dnsmasq, Ansible inventory, and libvirt networking.

Below is a code snippet with each parameter set. As noted previously, all of these are optional, but some are recommended.

---
ansible_inventory: 1
default_network: nat40
default_bridge: virbr40
default_disk_size: 20
default_image: /var/lib/libvirt/images/debian-13-generic-amd64.qcow2
default_os_type: debian13
default_ram: 2048
default_vcpu: 1
disk_path: /var/lib/libvirt/images
dnsmasq: 1
domain: example.net

Now for the instances section of the YAML file. For each instance, the below optional parameters can be set to override the defaults:

- ansible_groups: an array/list of Ansible groups to add the machine to.

- autostart: set to 1 if the instance should be started when the host is booted.

- bridge: the network bridge for the instance. An instance-level bridge overrides default_network and default_bridge, but if network is also set on the instance, network takes precedence.

- disk: a size in GB for the main OS disk.

- disk_path: a path to the directory where the instance’s disk(s) will be stored.

- dns: set a DNS server for the instance. If unset, the gateway address is used, which is <subnet>.1 unless gateway is overridden.

- domain: the instance’s domain.

- gateway: set a different default gateway than <subnet>.1.

- image: the path to an OS cloud image to use.

- ip: set a static IP for the host. If undefined, the VM will use DHCP.

- mac: a MAC address for the instance. Useful if you’re managing a DHCP reservation elsewhere.

- network: set the libvirt network for the host. The network must be in the networks hash (see below). If using a libvirt network that isn’t managed by the script, set the bridge parameter instead.

- os_type: the OS type for virt-install, such as debian13. The complete list of OS types can be obtained with the command osinfo-query os.

- ram: the RAM size in MB.

- skip: set to 1 to skip over an instance. This ensures that it won’t get deleted by mistake.

- user_data_file: the path to an alternative cloud-init user-data file.

- vcpu: the number of virtual CPUs.

- nic_id: the name of the network interface inside the VM, used when generating the static network configuration. Default is enp1s0, which is the default for the Debian cloud image.

- additional_disks: a hash/dictionary for specifying additional disks. Each accepts two parameters: size (in GB, required) and path (optional; defaults to the same path as the OS disk).

Below is a sample code snippet with one instance (db1) that sets all of these parameters and one (web1) that sets none of them:
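
To make the fallback rules above concrete, here is a minimal Perl sketch of how one instance's settings could be resolved. It follows the same `||` fallbacks as the script, but the helper name resolve_instance and the shortened keys of the defaults hash are assumptions made for illustration.

```perl
use strict;
use warnings;

# Hypothetical helper: resolve one instance's settings, falling back to the
# global defaults and deriving gateway/DNS from the static IP.
sub resolve_instance {
    my ($vals, $defaults) = @_;
    my %inst = (
        ram  => $vals->{ram}  || $defaults->{ram}  || 1024,
        disk => $vals->{disk} || $defaults->{disk} || 10,
        vcpu => $vals->{vcpu} || $defaults->{vcpu} || 1,
    );
    if ($vals->{ip}) {
        # First three octets, e.g. 192.168.40 for 192.168.40.30.
        my $subnet = join('.', (split(/\./, $vals->{ip}))[0..2]);
        $inst{ip}      = $vals->{ip};
        $inst{gateway} = $vals->{gateway} || "${subnet}.1";
        $inst{dns}     = $vals->{dns}     || $inst{gateway};
    }
    return \%inst;
}

my $db1 = resolve_instance({ ip => '192.168.40.30', ram => 2048 }, { disk => 20 });
print "$_ => $db1->{$_}\n" for sort keys %{$db1};
```

An instance without ip gets no gateway or dns entry at all, which matches the rule that such a VM is left to DHCP.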

---
instances:
  db1:
    autostart: 1
    bridge: virbr10
    dns: 1.1.1.1
    ip: 192.168.10.10
    ram: 4096
    disk: 20
    disk_path: /nfs/kvm
    domain: db.example.net
    gateway: 192.168.10.5
    image: /nfs/kvm/noble-server-cloudimg-amd64.qcow2
    mac: 00:11:22:33:44:dd
    network: vlan10
    os_type: ubuntu24.04
    user_data_file: /usr/local/etc/user-data_mysql
    additional_disks:
      data:
        size: 30
        path: /nfs/mysql
    ansible_groups:
      - db
  web1: {}
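
Going by the instance-processing code in prov_kvm_yaml.pl, an instance-level network is consulted before an instance-level bridge, and both come before the default bridge. The Perl sketch below illustrates that order. The helper name pick_bridge is hypothetical, and the vlan10 to virbr11 mapping is invented purely so the two sources give different answers.

```perl
use strict;
use warnings;

# Hypothetical helper: pick the bridge for an instance.
# Precedence: instance network > instance bridge > default bridge.
# (In the script, the default bridge itself comes from default_network
# when that is set, otherwise from default_bridge or br0.)
sub pick_bridge {
    my ($vals, $networks, $default_bridge) = @_;
    if ($vals->{network}) {
        my $net = $networks->{ $vals->{network} }
            or die "Network $vals->{network} not defined in the YAML file.\n";
        return $net->{bridge};
    }
    return $vals->{bridge} || $default_bridge;
}

# Assumed networks hash for illustration only.
my $networks = { vlan10 => { bridge => 'virbr11' } };

print pick_bridge({ network => 'vlan10', bridge => 'virbr10' }, $networks, 'br0'), "\n";
print pick_bridge({ bridge => 'virbr10' }, $networks, 'br0'), "\n";
print pick_bridge({}, $networks, 'br0'), "\n";
```

The real script additionally checks that an instance-level bridge exists on the host before using it; that check is left out here to keep the sketch self-contained.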

Finally, the networks section of the YAML file. Each network requires two parameters, bridge and net; mode is optional:

- bridge: the interface name. Usually something like virbr4, etc. will work. The script will error out if the name is already in use.

- mode: default is nat. Set to isolated if you don’t want libvirt to forward traffic.

- net: the first three octets of the subnet, such as 192.168.1.

Here is a code snippet with some parameters set. It provisions two DB servers and two web servers:

---
default_network: nat40
default_image: /nfs/images/debian-13-generic-amd64.qcow2
default_os_type: debian13
domain: ridpath.lab
ansible_inventory: 1
instances:
  db1:
    ip: 192.168.40.30
    ram: 2048
    disk: 20
    user_data_file: /usr/local/etc/user-data_mysql
    additional_disks:
      data:
        size: 30
    ansible_groups:
      - db
  db2:
    ip: 192.168.40.31
    ram: 2048
    disk: 20
    user_data_file: /usr/local/etc/user-data_mysql
    additional_disks:
      data:
        size: 30
    ansible_groups:
      - db
  web1:
    ip: 192.168.10.33
    network: dmz
    ram: 1024
    disk: 10
    ansible_groups:
      - web
  web2:
    ip: 192.168.10.34
    network: dmz
    ram: 1024
    disk: 10
    ansible_groups:
      - web
networks:
  nat40:
    bridge: virbr40
    net: 192.168.40
  dmz:
    bridge: virbr10
    net: 192.168.10
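
Since this example sets ansible_inventory: 1, it may help to see roughly what ends up in ridpath_inventory (assuming the file is saved as ridpath.yaml, which is my assumption). The Perl sketch below approximates the output of ansible_inventory.tt in plain Perl; it is not the script's code path, which goes through Template Toolkit, and exact whitespace may differ. The function name render_inventory is hypothetical.

```perl
use strict;
use warnings;

# Approximate, in plain Perl, what ansible_inventory.tt renders:
# one line per host, then one [group] section per Ansible group.
sub render_inventory {
    my ($hosts, $groups) = @_;
    my $out = '';
    for my $host (sort keys %{$hosts}) {
        my $ip = $hosts->{$host}{ip} // '';
        $out .= $host . ($ip ne '' ? " ansible_host=$ip" : '') . "\n";
    }
    for my $group (sort keys %{$groups}) {
        $out .= "[$group]\n";
        $out .= "$_\n" for sort @{ $groups->{$group} };
    }
    return $out;
}

my %hosts = (
    'db1.ridpath.lab'  => { ip => '192.168.40.30' },
    'db2.ridpath.lab'  => { ip => '192.168.40.31' },
    'web1.ridpath.lab' => { ip => '192.168.10.33' },
    'web2.ridpath.lab' => { ip => '192.168.10.34' },
);
my %groups = (
    db  => [ 'db1.ridpath.lab',  'db2.ridpath.lab' ],
    web => [ 'web1.ridpath.lab', 'web2.ridpath.lab' ],
);

print render_inventory(\%hosts, \%groups);
```

Hosts with a static IP get an ansible_host entry; a host that relies on DHCP is listed by name only.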

The user-data file for cloud-init is unchanged from the previous version:

#cloud-config
users:
  - name: ansible_user
    passwd: $6$7JnhIhkmDNu4rkr8$1NPhvMbqW.dsPmQBgQ6fIbptd2mqw49byvMdjIldnlg.AW44PR7YNa0esI9lXCS2PY8XIIEqdY4.kBmyvQUuJ.
    ssh_authorized_keys:
      - ssh-ed25519 pubkey1 mattpubkey1
      - ssh-ed25519 pubkey1 mattpubkey1
    groups: sudo
    sudo: ALL=(ALL) ALL
    shell: /bin/bash
    lock_passwd: false
ssh_pwauth: true
ssh_deletekeys: true
chpasswd:
  expire: false
  users:
    - name: root
      password: $6$7JnhIhkmDNu4rkr8$1NPhvMbqW.dsPmQBgQ6fIbptd2mqw49byvMdjIldnlg.AW44PR7YNa0esI9lXCS2PY8XIIEqdY4.kBmyvQUuJ.

The module file that contains the script's subroutines, ProvVMs.pm, has changed a bit. The Dnsmasq leases file subroutine has been removed and subroutines for libvirt networking have been added. I’ve also cleaned up a few things, such as consolidating frequently used code into subroutines. I’m not a good Perl programmer, but I like to think that I’m improving.

use strict;
use warnings;
use Template;

package ProvVMs;

require Exporter;
our @ISA = qw(Exporter);
our @EXPORT = qw(mac_gen validate_ip get_subnet check_bridge check_image create_ci_iso create_ci_net_config osinfo_query parse_ansible_inventory parse_hosts_file parse_xen_conf add_libvirt_net del_libvirt_net);

# Generate a random MAC address.
sub mac_gen {
    my @m;
    my $x = 0;
    while ($x < 3) {
        $m[$x] = int(rand(256));
        $x++;
    }
    my $mac = sprintf("00:16:3E:%02X:%02X:%02X", @m);
    return $mac;
}

# Regex-validate an IP address.
sub validate_ip {
    my $ip = $_[0];
    unless ($ip =~ /^(\d{1,3}\.){3}\d{1,3}$/) {
        die "$ip failed regex IP test. Exiting.\n";
    }
}

# Return first three octets of IP address.
sub get_subnet {
    my $ip = $_[0];
    my @ip_split = split(/\./, $ip);
    return join('.', @ip_split[0..2]);
}

# Process a Template Toolkit template with the given variables and write the result to a file.
sub parse_tt {
    my($template, $vars, $file) = @_;
    die "Template file $template not found!\n" unless (-f $template);
    my $tt = Template->new();
    $tt->process($template, $vars, $file) || die $tt->error;
}

# Check if a bridge interface is valid.
sub check_bridge {
    my $bridge = $_[0];
    unless ($bridge =~ /^(vir|vm)?br\d{1,4}$/) {
        die "Bridge interface identifier invalid. It must be like br0, virbr0 etc.\n";
    }
    unless (-x '/usr/sbin/brctl') {
        die "bridge-utils are missing!\n";
    }
    my $bridge_found = 0;
    open(BRCTL, '/usr/sbin/brctl show |') || die "brctl show failed!\n";
    while (my $line = <BRCTL>) {
        if ($line =~ /^$bridge\s+/) {
            $bridge_found = 1;
            last;
        }
    }
    close(BRCTL);
    return $bridge_found;
}

# Run qemu-img info on the base image and check if the size is sufficient.
sub check_image {
    my($cloud_image, $disk_size) = @_;
    unless (-x '/usr/bin/qemu-img') {
        die "qemu-utils are missing!\n";
    }
    my($cloud_img_size, $img_fmt);
    open(QEMU_IMG, "/usr/bin/qemu-img info $cloud_image |") || die "qemu-img info $cloud_image failed!\n";
    while (my $line = <QEMU_IMG>) {
        if ($line =~ /file format:\s+(qcow2|raw)/) {
            $img_fmt = $1;
        }
        if ($line =~ /virtual size:\s+(\d+)/) {
            $cloud_img_size = $1;
        }
    }
    close(QEMU_IMG);
    if ($cloud_img_size > $disk_size) {
        die "Disk size ${disk_size}GB is smaller than the virtual size of disk image ${cloud_image}. It must be at least ${cloud_img_size}GB.\n";
    }
    return($img_fmt);
}

# Create the cloud-init ISO
sub create_ci_iso {
    my($name, $user_data_file, $net_config_file) = @_;
    unless (-x '/usr/bin/genisoimage') {
        die "genisoimage is missing!\n";
    }
    mkdir("/tmp/${name}_ci");
    system("cp $user_data_file /tmp/${name}_ci/user-data");
    open(META_DATA, '>', "/tmp/${name}_ci/meta-data");
    print META_DATA "instance-id: ${name}\nlocal-hostname: ${name}\n";
    close(META_DATA);
    my $genisoimage = "/usr/bin/genisoimage -quiet -output /tmp/${name}_ci.iso -V cidata -r -J /tmp/${name}_ci/user-data /tmp/${name}_ci/meta-data";
    if (($net_config_file) && (-f $net_config_file )) {
        rename($net_config_file, "/tmp/${name}_ci/network-config");
        $genisoimage .= " /tmp/${name}_ci/network-config";
    }
    system($genisoimage) == 0 || die "genisoimage failed!\n";
}

# Generate a cloud-init network config file for a static IP address.
sub create_ci_net_config {
    my($name, $nic_id, $ip, $gateway, $dns, $domain) = @_;
    my $template = 'network-config.tt';
    die "NIC identifier $nic_id for $name is invalid.\n"
        unless ($nic_id =~ /^e(th|np)\d+(s\d+)?$/);
    my $tt_vars = {
        dns => $dns,
        domain => $domain,
        gateway => $gateway,
        ip => $ip,
        nic_id => $nic_id
    };
    &parse_tt($template, $tt_vars, "/tmp/${name}_ci_net_config");
}

# Validate OS type for virt-install with osinfo-query.
sub osinfo_query {
    my $os_type = $_[0];
    unless (-x '/usr/bin/osinfo-query') {
        die "osinfo-query is missing!\n";
    }
    system("/usr/bin/osinfo-query os short-id=${os_type} > /dev/null") == 0 || die "OS type $os_type is invalid or osinfo-query failed! Please check.\n";
}

# Generate an Ansible inventory file
sub parse_ansible_inventory {
    my $file = $_[0];
    my $tt_vars = {
        ansible_hosts => $_[1],
        ansible_groups => $_[2]
    };
    my $template = 'ansible_inventory.tt';
    print "Creating Ansible inventory file ${file}\n";
    &parse_tt($template, $tt_vars, $file);
}

# Create a dnsmasq hosts file (DNS records) in /etc/dnsmasq.d
sub parse_hosts_file {
    my $file = '/etc/dnsmasq.d/' . $_[0];
    my $tt_vars = {
        hosts => $_[1]
    };
    my $template = 'dnsmasq_hosts.tt';
    print "Creating dnsmasq hosts file $file and restarting dnsmasq.\n";
    &parse_tt($template, $tt_vars, $file);
    system('/usr/bin/systemctl restart dnsmasq') == 0 || die "dnsmasq failed to restart!\n";
}

# Generate /etc/xen/hostname.cfg
sub parse_xen_conf {
    my $name = $_[0];
    my $tt_vars = {
        name => $name,
        bridge => $_[1],
        ram => $_[2],
        vcpu => $_[3],
        disk_img => $_[4],
        mac => $_[5],
        add_disks => $_[6]
    };
    my $template = $_[7];
    &parse_tt($template, $tt_vars, "/etc/xen/${name}.cfg");
}

# Add a libvirt network.
sub add_libvirt_net {
    my($name, $bridge, $net, $mode, $domain, $dhcp) = @_;
    die "Parameter mode for network $name is invalid. It must be nat or isolated.\n"
        unless (grep { $_ eq $mode } qw( nat isolated ));
    die "Parameter net for network $name is invalid. It must be in xxx.xxx.xxx format.\n"
        unless ($net =~ /^(\d{1,3}\.){2}\d{1,3}$/);
    if (system("/usr/bin/virsh net-info $name > /dev/null 2>&1") == 0) {
        print "Network $name is already defined in libvirt. Skipping.\n";
    } else {
        die "Bridge interface $bridge is already in use!\n" if (&check_bridge($bridge) == 1);
        my $tt_vars = {
            bridge => $bridge,
            dhcp => $dhcp,
            domain => $domain,
            mode => $mode,
            name => $name,
            net => $net,
        };
        my $template = 'libvirt_net.tt';
        &parse_tt($template, $tt_vars, "${name}_net.xml");
        system("/usr/bin/virsh net-define ${name}_net.xml > /dev/null") == 0 || die "virsh net-define ${name}_net.xml failed.\n";
        system("/usr/bin/virsh net-start $name > /dev/null") == 0 || die "virsh net-start $name failed.\n";
        system("/usr/bin/virsh net-autostart $name > /dev/null") == 0 || die "virsh net-autostart $name failed.\n";
        unlink("${name}_net.xml");
        print "Created network $name.\n";
    }
}

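# Delete a libvirt network.
sub del_libvirt_net {
    my $name = $_[0];
    if (system("/usr/bin/virsh net-info $name > /dev/null 2>&1") == 0) {
        # net-destroy fails if the network is already inactive, so run net-undefine regardless.
        system("/usr/bin/virsh net-destroy $name > /dev/null 2>&1");
        system("/usr/bin/virsh net-undefine $name > /dev/null") == 0 || warn "virsh net-undefine $name failed.\n";
    }
}

# A module should end by returning a true value.
1;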
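
One thing worth noting about validate_ip is that its regex only checks the shape of the address, so something like 192.168.400.30 passes. If that matters to you, a stricter check could look like the Perl sketch below. The name is_valid_ipv4 is hypothetical, and unlike validate_ip it returns a boolean rather than calling die.

```perl
use strict;
use warnings;

# Hypothetical stricter variant of validate_ip: returns 1 for a dotted quad
# whose octets are all in the range 0-255, and 0 otherwise.
sub is_valid_ipv4 {
    my ($ip) = @_;
    return 0 unless defined $ip
        && $ip =~ /\A(\d{1,3})\.(\d{1,3})\.(\d{1,3})\.(\d{1,3})\z/;
    for my $octet ($1, $2, $3, $4) {
        return 0 if $octet > 255;
    }
    return 1;
}

for my $ip ('192.168.40.30', '192.168.400.30', '192.168.40') {
    printf "%-15s %s\n", $ip, is_valid_ipv4($ip) ? 'valid' : 'invalid';
}
```

To use it in place of the existing check, the caller would need to die on a false return, since the rest of the script relies on validate_ip stopping the run.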

Now for the script itself, prov_kvm_yaml.pl. The behavior is the same as the previous version, but it has been modified somewhat. The script assumes that all files (ProvVMs.pm, the template files, etc.) are in the current working directory, and that it will be run with sudo ./prov_kvm_yaml.pl yaml_file. It accepts the following options:

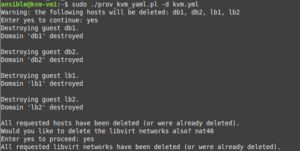

- -d: delete, instead of provision. It will require confirmation, similar to Terraform.

- -h host1,host2,…: a comma-separated list of hosts to limit operations to. For example, when combined with -d, only the specified hosts are deleted.

The script will not overwrite an instance if it already exists; you must delete it first. This is where the -d and -h host1,host2,… options come in handy. The script won’t delete anything not present in the YAML file, so if you want to keep something from being deleted by mistake (an instance or an additional disk), simply remove it from the YAML file (or set skip: 1 on an instance to skip over that instance).
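
The selection rules for a delete run can be summarized in a few lines of Perl. This is a sketch rather than the script's actual code: the helper name hosts_to_delete is hypothetical, and it applies the skip check up front, whereas the script applies it inside its delete loop.

```perl
use strict;
use warnings;

# Hypothetical helper: work out which instances a delete run would act on.
# $instances is the instances hash from the YAML file; @limit is the -h list.
sub hosts_to_delete {
    my ($instances, @limit) = @_;
    my @candidates = @limit ? @limit : sort keys %{$instances};
    my @selected;
    for my $host (@candidates) {
        unless (exists $instances->{$host}) {
            # Hosts that are not in the YAML file are never touched.
            warn "Warning: host $host not found in the YAML file.\n";
            next;
        }
        # skip: 1 protects an instance from deletion.
        next if ($instances->{$host}{skip} // 0) == 1;
        push @selected, $host;
    }
    return @selected;
}

my $instances = { db1 => {}, db2 => { skip => 1 }, web1 => {} };
print join(', ', hosts_to_delete($instances)), "\n";
print join(', ', hosts_to_delete($instances, 'web1')), "\n";
```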

#!/usr/bin/perl -w

use strict;
use Getopt::Long;
use YAML::XS 'LoadFile';
use lib qw(.);
use ProvVMs;

my($delete, $help, $hosts);
GetOptions ("delete" => \$delete,
            "hosts=s" => \$hosts
);

# Script requires specifying a project.yml or project.yaml file to process.
my $yaml_file = $ARGV[0] || die "You must specify a .yml or .yaml file.\n";
my $proj_name;
unless ($yaml_file =~ /^(\S+)\.(yaml|yml)$/) {
    die "File must be a .yml or .yaml file.\n";
} else {
    $proj_name = $1;
}

# Check if virsh and virt-install are present.
foreach my $prog (qw( virsh virt-install )){
    die "$prog not found!\n" unless (-x "/usr/bin/${prog}");
}

die "YAML file $yaml_file not found!\n" unless (-f $yaml_file);
my $yaml = YAML::XS::LoadFile($yaml_file) || die "Unable to load YAML file!\n";

# If -h host1,host2... is specified, limit operations to the listed instances.
my @limit_hosts;
if ($hosts) {
    @limit_hosts = split(/,/, $hosts);
}

my $default_disk_path = $yaml->{'disk_path'} || '/var/lib/libvirt/images';
my $dnsmasq = $yaml->{'dnsmasq'} || 0;

# The delete section of the script. When -d is specified without -h, everything is deleted.
# When -h host1,host2... is specified, only the listed hosts are deleted.
# After all operations are completed, the script exits.
if ($delete) {
    my @hosts_delete;
    if (@limit_hosts) {
        foreach my $host (@limit_hosts) {
            if ($yaml->{'instances'}->{$host}) {
                push(@hosts_delete, $host);
            } else {
                warn "Warning: host $host not found in $yaml_file.\n";
            }
        }
    } else {
        @hosts_delete = sort(keys(%{$yaml->{'instances'}}));
    }

    print 'Warning: the following hosts will be deleted: ' . join(', ', @hosts_delete) . "\n";
    print 'Enter yes to continue: ';
    chomp(my $selection = <STDIN>);
    exit unless ($selection eq 'yes');

    # Remove the dnsmasq hosts file only after confirmation, and only when deleting everything.
    if (!(@limit_hosts) && ($dnsmasq == 1) && (-f "/etc/dnsmasq.d/99_${proj_name}.conf")) {
        unlink("/etc/dnsmasq.d/99_${proj_name}.conf");
        system('/usr/bin/systemctl restart dnsmasq') == 0 || die "dnsmasq failed to restart!\n";
    }

    foreach my $host (@hosts_delete) {
        # Set skip: 1 to skip over this instance.
        if (($yaml->{'instances'}->{$host}->{'skip'}) && ($yaml->{'instances'}->{$host}->{'skip'} == 1)) {
            print "Skipping instance $host because skip is set to 1.\n";
            next;
        }
        print "Destroying guest $host.\n";
        if (system("/usr/bin/virsh domstate $host 2>&1 |grep -q running") == 0) {
            system("/usr/bin/virsh destroy $host") == 0 || die "virsh destroy $host failed!\n";
        }
        if (system("/usr/bin/virsh dominfo $host > /dev/null 2>&1") == 0) {
            system("/usr/bin/virsh undefine $host > /dev/null");
        } else {
            print "Guest $host not found. Perhaps the guest was already deleted?\n";
        }
        my $disk_path = $yaml->{'instances'}->{$host}->{'disk_path'} || $default_disk_path;
        unlink("${disk_path}/${host}.qcow2") if (-f "${disk_path}/${host}.qcow2");
        if ($yaml->{'instances'}->{$host}->{'additional_disks'}) {
            while (my($disk_name, $disk_params) = each %{$yaml->{'instances'}->{$host}->{'additional_disks'}}) {
                my $add_disk_path = $disk_params->{'path'} || $disk_path;
                unlink("${add_disk_path}/${host}_${disk_name}.qcow2") if (-f "${add_disk_path}/${host}_${disk_name}.qcow2");
            }
        }
    }
    print "All requested hosts have been deleted (or were already deleted).\n";

    if (($yaml->{'networks'}) && !(@limit_hosts)) {
        print "Would you like to delete the libvirt networks also? " . join(', ', keys(%{$yaml->{'networks'}})) . "\n";
        print 'Enter yes to proceed: ';
        chomp(my $selection = <STDIN>);
        exit unless ($selection eq 'yes');
        foreach my $network (keys(%{$yaml->{'networks'}})) {
            &del_libvirt_net($network);
        }
        print "All requested libvirt networks have been deleted (or were already deleted).\n";
    }
    exit;
}

# All code below here is used for provisioning/creating hosts.
my $domain = $yaml->{'domain'} || 'localdomain';

# Creating libvirt network(s) if defined.
my $networks = $yaml->{'networks'};
if ($networks) {
    print "Creating libvirt networks.\n";
    while (my($net, $vals) = each %{$networks}) {
        die "You must define a bridge for network $net.\n" unless ($vals->{'bridge'});
        die "You must define a net for network $net in xxx.xxx.xxx format.\n" unless ($vals->{'net'});
        my $mode = $vals->{'mode'} || 'nat';
        &add_libvirt_net($net, $vals->{'bridge'}, $vals->{'net'}, $mode, $domain);
    }
}

my $default_network = $yaml->{'default_network'};
if (($default_network) && !($networks->{$default_network})) {
    die "Default network $default_network is invalid!\n";
}

my $default_bridge;
# Define the default bridge. default_network takes precedence over default_bridge.
if (($default_network) && ($networks->{$default_network}->{'bridge'})) {
    $default_bridge = $networks->{$default_network}->{'bridge'};
} else {
    if ($yaml->{'default_bridge'}) {
        $default_bridge = $yaml->{'default_bridge'};
    } else {
        print "No default bridge or network is set in the YAML file. Using br0.\n";
        $default_bridge = 'br0';
    }
    # Only check bridge status if this isn't a libvirt network.
    die "Bridge interface $default_bridge not present!\n" unless (&check_bridge($default_bridge) == 1);
}

# Set to enp1s0, which is the default for the Debian cloud image.
my $default_nic_id = 'enp1s0';

# Create default disk path
unless (-d $default_disk_path) {
    system("mkdir -p $default_disk_path");
}

# Read in default image file from the YAML file.
my $default_image;
unless ($yaml->{'default_image'}) {
    warn "Warning: default_image not set in $yaml_file. You must set image: <image> for each instance.\n";
} else {
    $default_image = $yaml->{'default_image'};
    die "Image $default_image not found!\n" unless (-f $default_image);
}

# Set other defaults.
my $default_disk_size = $yaml->{'default_disk_size'} || 10;
my $default_ram = $yaml->{'default_ram'} || 1024;
my $default_vcpu = $yaml->{'default_vcpu'} || 1;
my $ansible_inventory = $yaml->{'ansible_inventory'} || 0;
my $default_os_type = $yaml->{'default_os_type'} || 'linux2020';
my $default_user_data_file = $yaml->{'default_user_data_file'} || 'user-data';
die "Default user-data file $default_user_data_file not found!\n" unless (-f $default_user_data_file);
&osinfo_query($default_os_type);

# Processing the instances hash in the YAML file.
my($ansible_groups, $instances, $dnsmasq_hosts, $ansible_hosts);
my(@static_ips, @checked_bridges, @checked_os_types);

print "Creating disk images for any new instances.\n";

while (my($inst, $vals) = each %{$yaml->{'instances'}}) {
    my $skip = 0;
    # Set skip: 1 to skip over this instance.
    if (($vals->{'skip'}) && ($vals->{'skip'} == 1)) {
        print "Skipping instance $inst because skip is set to 1.\n";
        $skip = 1;
    }
    # Skip if -h host1,host2 is set and host isn't in that list.
    if ((@limit_hosts) && !(grep { $_ eq $inst } @limit_hosts)) {
        $skip = 1;
    }
    # Skip over VM if it already exists.
    if (system("/usr/bin/virsh dominfo $inst > /dev/null 2>&1") == 0) {
        print "Skipping instance $inst because it has already been provisioned.\n";
        $skip = 1;
    }

    # Allow setting domain: <domain> on an individual instance.
    my $inst_domain = $vals->{'domain'} || $domain;
    $ansible_hosts->{"${inst}.${inst_domain}"} = {};

    #
    # Instance/VM networking section.
    # ip: <ip> is optional, as you may want to configure the DHCP reservation elsewhere.
    # When ip is set, a static IP is configured with cloud-init. Set gateway: ip and dns: ip
    # to set the default gateway and DNS server for the host. Otherwise <subnet>.1 will be
    # used for both. If dnsmasq is managed on the VM host by this script, add the IP to the
    # hosts hash. If libvirt networking is used, no action is taken other than to validate the IP.
    if ($vals->{'ip'}) {
        &validate_ip($vals->{'ip'});
        if (($skip == 0) && (grep { $_ eq $vals->{'ip'}} @static_ips)) {
            die "IP has already been used for another instance! Please check YAML file.\n"
        }
        if ($dnsmasq == 1) {
            $dnsmasq_hosts->{"${inst}.${inst_domain}"} = $vals->{'ip'};
        }
        $ansible_hosts->{"${inst}.${inst_domain}"}->{'ip'} = $vals->{'ip'};
        # If skip is 0, generate a cloud-init network config file.
        if ($skip == 0) {
            my $subnet = &get_subnet($vals->{'ip'});
            my $gateway = $vals->{'gateway'} || "${subnet}.1";
            my $dns = $vals->{'dns'} || $gateway;
            my $nic_id = $vals->{'nic_id'} || $default_nic_id;
            &create_ci_net_config($inst, $nic_id, $vals->{'ip'}, $gateway, $dns, $inst_domain);
        }
        push(@static_ips, $vals->{'ip'});
    } else {
        $ansible_hosts->{"${inst}.${inst_domain}"}->{'ip'} = '';
    }

    # Skip over instance if skip flag gets set.
    next if ($skip == 1);

    # Generate a MAC address if not defined with mac: <mac> in the YAML file.
    my $mac = $vals->{'mac'} || &mac_gen;

    # Define an instance's bridge interface. The network parameter takes
    # precedence over the bridge parameter. The network must be defined in
    # the YAML file also or it will throw an error. The bridge parameter on
    # an individual instance takes precedence over the default bridge or network.
    # If a bridge is specified, the script will check to see if it exists.
    my $bridge;
    my $network = $default_network;
    if ($vals->{'network'}) {
        die "Network not defined in the YAML file.\n" unless ($networks->{$vals->{'network'}});
        # We do not need to check if the bridge is valid here, as this has been done already.
        $bridge = $networks->{$vals->{'network'}}->{'bridge'};
        $network = $vals->{'network'};
    } else {
        if ($vals->{'bridge'}) {
            $bridge = $vals->{'bridge'};
            unless (grep { $_ eq $bridge } @checked_bridges) {
                if ((&check_bridge($bridge)) == 1) {
                    push(@checked_bridges, $bridge);
                } else {
                    die "Bridge interface $bridge not present!\n";
                }
            }
        } else {
            $bridge = $default_bridge;
        }
    }

    print "Creating disk(s) for $inst.\n";
    if (!($default_image) && !($vals->{'image'})) {
        die "No default image was defined nor was an image set for instance ${inst}. Please check and try again.\n";
    }

    # Use the default image if one isn't set for the individual instance.
    my $image = $default_image;
    if ($vals->{'image'}) {
        $image = $vals->{'image'};
        die "Image $image not found!\n" unless (-f $image);
    }
    my $disk_size = $vals->{'disk'} || $default_disk_size;

    # Make sure that image exists and can be resized to the specified size.
    &check_image($image, $disk_size);

    # Use the default OS type if one isn't set for the individual instance.
    # If one is specified, make sure that it is valid.
    my $os_type = $default_os_type;
    if ($vals->{'os_type'}) {
        $os_type = $vals->{'os_type'};
        unless (grep { $_ eq $os_type } @checked_os_types) {
            push(@checked_os_types, $os_type) if (&osinfo_query($os_type));
        }
    }

    # Use the default disk path if one isn't set for the individual instance.
    # If one is specified, make sure that it exists.
    my $disk_path = $default_disk_path;
    if ($vals->{'disk_path'}) {
        $disk_path = $vals->{'disk_path'};
        die "Disk path $disk_path doesn't exist! Please check.\n" unless (-d $disk_path);
    }

    # Copy the cloud image to file for the instance.
    my $disk_image = "${disk_path}/${inst}.qcow2";
    # Change this to use rsync if you want a progress bar.
    system("cp $image $disk_image") == 0 || die "cp $image $disk_image failed!\n";
    system("/usr/bin/qemu-img resize -q -f qcow2 $disk_image ${disk_size}G") == 0
        || die "qemu-img resize -q -f qcow2 ${disk_image} ${disk_size}G failed!\n";

# Processing the additional_disks section for the instance, if specified.

# It follows the format of:

# name:

# size: 30

# path: <directory>

# Size is in GB.

# Note: this doesn't format the disk inside the instance. You must use cloud-init or do it manually.

my @additional_disks;

if ($vals->{'additional_disks'}) {

while (my($disk_name, $disk_params) = each %{$vals->{'additional_disks'}}) {

unless (($disk_params->{'size'}) && ($disk_params->{'size'} =~ /^\d+$/)) {

die "You must specify a size in GB for disk ${disk_name}, instance ${inst}!\n";

}

my $size = $disk_params->{'size'};

my $add_disk_path = $disk_path;

if ($disk_params->{'path'}) {

$add_disk_path = $disk_params->{'path'};

die "Additional disk path $add_disk_path not found! Please check.\n" unless (-d $add_disk_path);

}

system("/usr/bin/qemu-img create -q -f qcow2 ${add_disk_path}/${inst}_${disk_name}.qcow2 ${size}G") == 0 ||

die "qemu-img create -q -f qcow2 ${add_disk_path}/${inst}_${disk_name}.qcow2 ${size}G failed!\n";

push(@additional_disks, "${add_disk_path}/${inst}_${disk_name}.qcow2");

}

}

# Use the default cloud-init user-data file unless one is specified for the instance.

my $user_data_file = $default_user_data_file;

if ($vals->{'user_data_file'}) {

$user_data_file = $vals->{'user_data_file'};

die "user-data file $user_data_file not found!\n" unless (-f $user_data_file);

}

# Create the cloud-init meta-data file for the instance.

open(META_DATA, '>', "/tmp/${inst}_meta-data");

print META_DATA "instance-id: ${inst}\nlocal-hostname: ${inst}\n";

close(META_DATA);

# For the Ansible inventory file. If ansible_groups is specified for the instance,

# add the instance to that group.

if ($vals->{'ansible_groups'}) {

foreach my $group (@{$vals->{'ansible_groups'}}) {

unless ($ansible_groups->{$group}) {

@{$ansible_groups->{$group}} = ();

}

push(@{$ansible_groups->{$group}}, "${inst}.${inst_domain}");

}

}

$instances->{$inst} = {

'bridge' => $bridge,

'network' => $network,

'ip' => $vals->{'ip'},

'domain' => $inst_domain,

'ram' => $vals->{'ram'} || $default_ram,

'disk_image' => $disk_image,

'vcpu' => $vals->{'vcpu'} || $default_vcpu,

'mac' => $mac,

'os_type' => $os_type,

'user_data_file' => $user_data_file,

'additional_disks' => \@additional_disks,

'autostart' => $vals->{'autostart'}

};

}

# If dnsmasq_hosts is defined, configure the hosts file.

if ($dnsmasq_hosts) {

&parse_hosts_file("99_${proj_name}.conf", $dnsmasq_hosts);

}

# If ansible_inventory: 1 is set in the YAML file, process the Ansible inventory template <project>_inventory.

if ($ansible_inventory == 1) {

&parse_ansible_inventory("${proj_name}_inventory", $ansible_hosts, $ansible_groups);

}

# Run virt-install for each VM.

while (my($inst, $vals) = each %{$instances}) {

# Set a libvirt DNS record if network is defined

if (($vals->{'network'}) && ($vals->{'ip'})) {

my $virsh_net_update = '/usr/bin/virsh net-update ' . $vals->{'network'} . ' add-last dns-host ';

$virsh_net_update .= '\'<host ip="' . $vals->{'ip'} . '">';

$virsh_net_update .= "<hostname>${inst}." . $vals->{'domain'} . '</hostname></host>\' --live --config';

system("$virsh_net_update > /dev/null") == 0 || warn "Creation of libvirt DNS record for instance $inst failed.\n";

#print "$virsh_net_update\n";

}

my $virt_install_cmd = "/usr/bin/virt-install --name $inst";

$virt_install_cmd .= ' --os-variant ' . $vals->{'os_type'};

$virt_install_cmd .= ' --memory ' . $vals->{'ram'};

$virt_install_cmd .= ' --vcpus ' . $vals->{'vcpu'};

$virt_install_cmd .= ' --import --noautoconsole';

$virt_install_cmd .= ' --network bridge=' . $vals->{'bridge'} . ',mac=' . $vals->{'mac'};

$virt_install_cmd .= ' --cloud-init user-data=' . $vals->{'user_data_file'} . ",meta-data=/tmp/${inst}_meta-data,disable=on";

if (($vals->{'ip'}) && (-f "/tmp/${inst}_ci_net_config")) {

$virt_install_cmd .= ",network-config=/tmp/${inst}_ci_net_config";

}

$virt_install_cmd .= ' --disk ' . $vals->{'disk_image'};

if ($vals->{'additional_disks'}) {

foreach my $disk (@{$vals->{'additional_disks'}}) {

$virt_install_cmd .= " --disk $disk";

}

}

print "Provisioning instance ${inst}.\n";

system("$virt_install_cmd > /dev/null") == 0 || die "virt-install failed to run for ${inst}! Check output above.\n";

#print "$virt_install_cmd\n";

if (($vals->{'autostart'}) && ($vals->{'autostart'} == 1)) {

print "Setting instance $inst to be autostarted as requested.\n";

system("/usr/bin/virsh autostart $inst > /dev/null") == 0 || die "virsh autostart failed to run for ${inst}! Check output above.\n";

}

}
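Nearly every external command in the script is checked by comparing the return value of system() with 0. Here is a minimal sketch of why that comparison matters. The run_ok helper is hypothetical and not part of the script or ProvVMs.pm; it runs the command in list form, so no shell is involved, and uses $^X (the running perl) so it works on any host.

```perl
use strict;
use warnings;

# Hypothetical helper, not part of the script or ProvVMs.pm.
# system() returns the wait status, so 0 means success and anything
# else means failure. That is the opposite of Perl's usual truthiness,
# which is why the result has to be compared with 0 explicitly.
sub run_ok {
    my @cmd = @_;
    return (system(@cmd) == 0) ? 1 : 0;
}

# $^X is the path of the perl that is currently running.
foreach my $code (0, 3) {
    my $ok = run_ok($^X, '-e', "exit $code");
    print "exit $code: ", ($ok ? 'success' : 'failure'), "\n";
}
```

This prints "exit 0: success" and "exit 3: failure". Writing system(...) || die without the == 0 would die on the successful command and carry on after the failed one.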

Updating my Xen provisioning script to be compatible with ProvVMs.pm

One of the reasons I put my subroutines in a module was to make them available to both my Xen and KVM scripts. Because the ProvVMs module changed significantly, including the removal of the Dnsmasq DHCP reservation functionality, I needed to make the prov_xen_yaml.pl script from a previous post compatible with it. The only file that had to be modified for Xen was the script itself; all other files are the same as the KVM versions. The Xen script needs a .yml file, prov_xen_yaml.pl, ProvVMs.pm, xen.tt, network-config.tt, and user-data; ansible_inventory.tt and dnsmasq_hosts.tt are only needed if Ansible and Dnsmasq will be used. As of this writing, my Xen script can only use bridged networks. I would like to explore using Xen with NAT networks in the future, possibly with libvirt. Below is a sample .yml file and the updated version of prov_xen_yaml.pl.

---

default_bridge: br0

default_image: /nfs/images/debian-13-generic-amd64.raw

domain: ridpath.lab

ansible_inventory: 1

instances:

  db1:

    ip: 192.168.40.30

    ram: 2048

    disk: 20

    user_data_file: /usr/local/etc/user-data_mysql

    additional_disks:

      data:

        size: 30

    ansible_groups:

      - db

  db2:

    ip: 192.168.40.31

    ram: 2048

    disk: 20

    user_data_file: /usr/local/etc/user-data_mysql

    additional_disks:

      data:

        size: 30

    ansible_groups:

      - db

  web1:

    ip: 192.168.10.33

    bridge: dmz

    ram: 1024

    disk: 10

    ansible_groups:

      - web

  web2:

    ip: 192.168.10.34

    bridge: dmz

    ram: 1024

    disk: 10

    ansible_groups:

      - web
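None of the instances in the sample set gateway or dns. In the script that follows, an instance with an ip but no gateway gets the .1 address of its subnet, and dns falls back to the gateway. Here is a rough sketch of that fallback chain. The subnet_of function is a hypothetical stand-in for get_subnet from ProvVMs.pm; I am assuming it simply returns the first three octets (a /24), and the real one may differ.

```perl
use strict;
use warnings;

# Hypothetical stand-in for get_subnet() in ProvVMs.pm. Assumption:
# it returns the first three octets of an IPv4 address (a /24 subnet).
sub subnet_of {
    my ($ip) = @_;
    my @octets = split(/\./, $ip);
    die "Not an IPv4 address: $ip\n" unless (@octets == 4);
    return join('.', @octets[0 .. 2]);
}

# Mirrors the fallback chain in the script: gateway defaults to
# <subnet>.1, and dns defaults to whatever the gateway ended up being.
sub net_defaults {
    my ($vals) = @_;
    my $gateway = $vals->{'gateway'} || subnet_of($vals->{'ip'}) . '.1';
    my $dns     = $vals->{'dns'}     || $gateway;
    return ($gateway, $dns);
}

my ($gw, $dns) = net_defaults({ ip => '192.168.40.30' });
print "gateway=$gw dns=$dns\n";
```

For db1 this prints gateway=192.168.40.1 dns=192.168.40.1. If your gateway is not on .1, set gateway: (and dns: if needed) on the instance.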

#!/usr/bin/perl -w

use strict;

use Getopt::Long;

use YAML::XS 'LoadFile';

use lib qw(.);

use ProvVMs;

my($delete, $help, $hosts);

GetOptions ("delete" => \$delete,

"hosts=s" => \$hosts

);

# Script requires specifying a project.yml or project.yaml file to process.

my $yaml_file = $ARGV[0] || die "You must specify a .yml or .yaml file.\n";

my $proj_name;

unless ($yaml_file =~ /^(\S+)\.(yaml|yml)$/) {

die "File must be a .yml or .yaml file.\n";

} else {

$proj_name = $1;

}

die "YAML file $yaml_file not found!\n" unless (-f $yaml_file);

my $yaml = YAML::XS::LoadFile($yaml_file) || die "Unable to load YAML file!\n";

# If -h host1,host2... is specified, limit operations to the listed instances.

my @limit_hosts;

if ($hosts) {

@limit_hosts = split(/,/, $hosts);

}

my $default_disk_path = $yaml->{'disk_path'} || '/srv/xen';

my $dnsmasq = $yaml->{'dnsmasq'} || 0;

# The delete section of the script. When -d is specified without -h, everything is deleted.

# When -h host1,host2... is specified, only the listed hosts are deleted.

# After all operations are completed, the script exits.

if ($delete) {

my @hosts_delete;

if (@limit_hosts) {

foreach my $host (@limit_hosts) {

if ($yaml->{'instances'}->{$host}) {

push(@hosts_delete, $host);

} else {

warn "Warning: instance $host not found in $yaml_file.\n";

}

}

} else {

@hosts_delete = sort(keys(%{$yaml->{'instances'}}));

if (($dnsmasq == 1) && (-f "/etc/dnsmasq.d/99_${proj_name}.conf")) {

unlink("/etc/dnsmasq.d/99_${proj_name}.conf");

system('/usr/bin/systemctl restart dnsmasq') == 0 || die "dnsmasq failed to restart!\n";

}

}

print 'Warning: the following instances will be deleted: ' . join(', ', @hosts_delete) . "\n";

print 'Enter yes to continue: ';

chomp(my $selection = <STDIN>);

exit unless ($selection eq 'yes');

foreach my $host (@hosts_delete) {

# Set skip: 1 to skip over this instance.

if (($yaml->{'instances'}->{$host}->{'skip'}) && ($yaml->{'instances'}->{$host}->{'skip'} == 1)) {

print "Skipping instance $host because skip is set to 1.\n";

next;

}

print "Destroying instance $host.\n";

if (system("/usr/sbin/xl list $host > /dev/null 2>&1") == 0) {

system("/usr/sbin/xl destroy $host") == 0 || die "xl destroy $host failed!\n";

}

unlink("/etc/xen/auto/${host}.cfg") if (-f "/etc/xen/auto/${host}.cfg");

if (-f "/etc/xen/${host}.cfg") {

unlink("/etc/xen/${host}.cfg");

} else {

print "/etc/xen/${host}.cfg not found. Perhaps the instance was already deleted?\n";

}

my $disk_path = $yaml->{'instances'}->{$host}->{'disk_path'} || $default_disk_path;

unlink("${disk_path}/${host}.raw") if (-f "${disk_path}/${host}.raw");

if ($yaml->{'instances'}->{$host}->{'additional_disks'}) {

while (my($disk_name, $disk_params) = each %{$yaml->{'instances'}->{$host}->{'additional_disks'}}) {

my $add_disk_path = $disk_params->{'path'} || $disk_path;

my $format = $disk_params->{'format'} || 'raw';

unlink("${add_disk_path}/${host}_${disk_name}.${format}") if (-f "${add_disk_path}/${host}_${disk_name}.${format}");

}

}

}

print "All requested instances have been deleted (or were already deleted).\n";

exit;

}

# All code below here is used for provisioning/creating instances.

# Read in default bridge from the YAML file and check if it exists with brctl.

my $default_bridge = 'br0';

unless ($yaml->{'default_bridge'}) {

warn "Warning: default_bridge not set in $yaml_file. Using br0.\n";

} else {

$default_bridge = $yaml->{'default_bridge'};

}

die "Bridge interface $default_bridge not present!\n" unless (&check_bridge($default_bridge) == 1);

# Set to enX0, which is the name the Debian cloud image gives the first NIC under Xen.

my $default_nic_id = 'enX0';

# Create default disk path and /etc/xen/auto if necessary.

unless (-d $default_disk_path) {

system("mkdir -p $default_disk_path") == 0 || die "Unable to create $default_disk_path!\n";

}

unless (-d '/etc/xen/auto') {

mkdir('/etc/xen/auto') || die "Unable to create /etc/xen/auto: $!\n";

}

# Read in default image file from the YAML file.

my $default_image;

unless ($yaml->{'default_image'}) {

warn "Warning: default_image not set in $yaml_file. You must set image: <image> for each instance.\n";

} else {

$default_image = $yaml->{'default_image'};

die "Image $default_image not found!\n" unless (-f $default_image);

}

# Set other defaults.

my $default_disk_size = $yaml->{'default_disk_size'} || 10;

my $default_ram = $yaml->{'default_ram'} || 1024;

my $default_vcpu = $yaml->{'default_vcpu'} || 1;

my $domain = $yaml->{'domain'} || 'localdomain';

my $ansible_inventory = $yaml->{'ansible_inventory'} || 0;

my $default_user_data_file = $yaml->{'default_user_data_file'} || 'user-data';

die "Default user-data file $default_user_data_file not found!\n" unless (-f $default_user_data_file);

# Processing the instances hash in the YAML file.

my($ansible_groups, $instances, $dnsmasq_hosts, $ansible_hosts);

my(@static_ips, @checked_bridges);

print "Creating disk images for any new instances.\n";

while (my($inst, $vals) = each %{$yaml->{'instances'}}) {

my $ci_net_config;

my $skip = 0;

# Set skip: 1 to skip over this instance.

if (($vals->{'skip'}) && ($vals->{'skip'} == 1)) {

print "Skipping instance $inst because skip is set to 1.\n";

$skip = 1;

}

# Skip if -h host1,host2 is set and host isn't in that list.

if ((@limit_hosts) && !(grep { $_ eq $inst } @limit_hosts)) {

$skip = 1;

}

# Skip instance if its Xen config file exists.

if (-f "/etc/xen/${inst}.cfg") {

print "Skipping instance $inst because it has already been provisioned.\n";

$skip = 1;

}

# Allow setting domain: <domain> on an individual instance.

my $inst_domain = $vals->{'domain'} || $domain;

$ansible_hosts->{"${inst}.${inst_domain}"} = {};

# ip: <ip> is optional, as you may want to configure the DHCP reservation elsewhere.

if ($vals->{'ip'}) {

if (($skip == 0) && (grep { $_ eq $vals->{'ip'}} @static_ips)) {

die "IP $vals->{'ip'} has already been used for another instance! Please check YAML file.\n";

}

if ($dnsmasq == 1) {

$dnsmasq_hosts->{"${inst}.${inst_domain}"} = $vals->{'ip'};

}

$ansible_hosts->{"${inst}.${inst_domain}"}->{'ip'} = $vals->{'ip'};

# If skip is 0, generate a cloud-init network config file.

if ($skip == 0) {

my $subnet = &get_subnet($vals->{'ip'});

my $gateway = $vals->{'gateway'} || "${subnet}.1";

my $dns = $vals->{'dns'} || $gateway;

my $nic_id = $vals->{'nic_id'} || $default_nic_id;

&create_ci_net_config($inst, $nic_id, $vals->{'ip'}, $gateway, $dns, $inst_domain);

$ci_net_config = "/tmp/${inst}_ci_net_config";

}

push(@static_ips, $vals->{'ip'});

} else {

$ansible_hosts->{"${inst}.${inst_domain}"}->{'ip'} = '';

}

# Skip over instance if skip flag gets set.

next if ($skip == 1);

# Generate a MAC address if not defined with mac: <mac> in the YAML file.

my $mac = $vals->{'mac'} || &mac_gen;

# Use the default bridge if one isn't set for the individual instance.

# If one is specified, make sure that it exists.

my $bridge = $default_bridge;

if ($vals->{'bridge'}) {

$bridge = $vals->{'bridge'};

unless (grep { $_ eq $bridge } @checked_bridges) {

die "Bridge interface $bridge not present!\n" unless (&check_bridge($bridge) == 1);

push(@checked_bridges, $bridge);

}

}

print "Creating instance $inst.\n";

if (!($default_image) && !($vals->{'image'})) {

die "No default image was defined nor was an image set for instance ${inst}. Please check and try again.\n";

}

# Use the default image if one isn't set for the individual instance.

my $image = $default_image;

if ($vals->{'image'}) {

$image = $vals->{'image'};

die "Image $image not found!\n" unless (-f $image);

}

my $disk_size = $vals->{'disk'} || $default_disk_size;

# Make sure that image exists and can be resized to the specified size.

&check_image($image, $disk_size);

# Use the default disk path if one isn't set for the individual instance.

# If one is specified, make sure that it exists.

my $disk_path = $default_disk_path;

if ($vals->{'disk_path'}) {

$disk_path = $vals->{'disk_path'};

die "Disk path $disk_path doesn't exist! Please check.\n" unless (-d $disk_path);

}

# Copy the cloud image to file for the instance.

my $disk_image = "${disk_path}/${inst}.raw";

# Change this to use rsync if you want a progress bar. It takes some time.

system("cp $image $disk_image") == 0 || die "cp $image $disk_image failed!\n";

system("/usr/bin/qemu-img resize -q -f raw $disk_image ${disk_size}G") == 0 || die "qemu-img resize -q -f raw ${disk_image} ${disk_size}G failed!\n";

# Processing the additional_disks section for the instance, if specified.

# It follows the format of:

# name:

# size: 30

# format: qcow2|raw

# path: <directory>

# Size is in GB and format must be raw or qcow2.

# Note: this doesn't format the disk inside the instance. You must use cloud-init or do it manually.

my @disk_ids = map { "xvd${_}" } ('b' .. 'z');

my @additional_disks;

if ($vals->{'additional_disks'}) {

while (my($disk_name, $disk_params) = each %{$vals->{'additional_disks'}}) {

die "You must specify a size in GB for disk ${disk_name}, instance ${inst}!\n" unless (($disk_params->{'size'}) && ($disk_params->{'size'} =~ /^\d+$/));

my $size = $disk_params->{'size'};

# Default to raw if format isn't specified.

my $format = $disk_params->{'format'} || 'raw';

die "'format' must be raw or qcow2 for disk ${disk_name}, instance ${inst}!\n" unless (grep { $_ eq $format } qw(raw qcow2));

my $add_disk_path = $disk_path;

if ($disk_params->{'path'}) {

$add_disk_path = $disk_params->{'path'};

die "Additional disk path $add_disk_path not found! Please check.\n" unless (-d $add_disk_path);

}

my $id = shift(@disk_ids);

system("/usr/bin/qemu-img create -q -f $format ${add_disk_path}/${inst}_${disk_name}.${format} ${size}G") == 0 ||

die "qemu-img create -q -f $format ${add_disk_path}/${inst}_${disk_name}.${format} ${size}G failed!\n";

push(@additional_disks, "'${add_disk_path}/${inst}_${disk_name}.${format},${format},${id},w'");

}

}

# Use the default cloud-init user-data file unless one is specified for the instance.

my $user_data_file = $default_user_data_file;

if ($vals->{'user_data_file'}) {

$user_data_file = $vals->{'user_data_file'};

die "user-data file $user_data_file not found!\n" unless (-f $user_data_file);

}

# For the Ansible inventory file. If ansible_groups is specified for the instance,

# add the instance to that group.

if ($vals->{'ansible_groups'}) {

foreach my $group (@{$vals->{'ansible_groups'}}) {

unless ($ansible_groups->{$group}) {

@{$ansible_groups->{$group}} = ();

}

push(@{$ansible_groups->{$group}}, "${inst}.${inst_domain}");

}

}

# Create the cloud-init ISO for the instance and add it to the additional_disks array.

&create_ci_iso($inst, $user_data_file, $ci_net_config);

my $ci_disk_id = shift(@disk_ids);

push(@additional_disks, "'/tmp/${inst}_ci.iso,,${ci_disk_id},cdrom'");

$instances->{$inst} = {

'bridge' => $bridge,

'ram' => $vals->{'ram'} || $default_ram,

'disk_image' => $disk_image,

'vcpu' => $vals->{'vcpu'} || $default_vcpu,

'mac' => $mac,

'additional_disks' => \@additional_disks,

'autostart' => $vals->{'autostart'}

};

}

# If dnsmasq_hosts is defined, configure the hosts file.

if ($dnsmasq_hosts) {

&parse_hosts_file("99_${proj_name}.conf", $dnsmasq_hosts);

}

# If ansible_inventory: 1 is set in the YAML file, process the Ansible inventory template <project>_inventory.

if ($ansible_inventory == 1) {

&parse_ansible_inventory("${proj_name}_inventory", 'ansible_inventory.tt', $ansible_hosts, $ansible_groups);

}

# Start each instance with the cloud-init ISO attached. Then re-parse the Xen config file to remove the cloud-init disk for future start-ups.

while (my($inst, $vals) = each %{$instances}) {

my $additional_disks_list = join(', ', @{$vals->{'additional_disks'}});

# Parse with the cloud-init ISO.

&parse_xen_conf($inst, $vals->{'bridge'}, $vals->{'ram'}, $vals->{'vcpu'}, $vals->{'disk_image'}, $vals->{'mac'}, $additional_disks_list, 'xen.tt');

print "Starting instance ${inst}.\n";

system("/usr/sbin/xl create /etc/xen/${inst}.cfg") == 0 || die "xl create command failed to run for ${inst}! Check output above.\n";

# The cloud-init ISO will be the last disk in the array. This removes it and "re-joins" the additional disk list.

pop(@{$vals->{'additional_disks'}});

if (@{$vals->{'additional_disks'}}) {

$additional_disks_list = join(', ', @{$vals->{'additional_disks'}});

} else {

$additional_disks_list = '';

}

# Parse without the cloud-init ISO.

&parse_xen_conf($inst, $vals->{'bridge'}, $vals->{'ram'}, $vals->{'vcpu'}, $vals->{'disk_image'}, $vals->{'mac'}, $additional_disks_list, 'xen.tt');

if (($vals->{'autostart'}) && ($vals->{'autostart'} == 1)) {

print "Setting VM to be autostarted as requested.\n";

symlink("/etc/xen/${inst}.cfg", "/etc/xen/auto/${inst}.cfg");

}

}
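The two-pass trick with the cloud-init ISO is easier to see in isolation. Below is a minimal sketch of how the disk list is built and then trimmed. The build_disk_specs helper is hypothetical and is not in the script or ProvVMs.pm. It also sorts the disk names so that device IDs are assigned in a predictable order, whereas the script above walks the hash with each.

```perl
use strict;
use warnings;

# Hypothetical helper: build the xl disk specs for an instance's extra
# disks, followed by the cloud-init ISO as the final entry.
sub build_disk_specs {
    my ($inst, $path, $disks, $iso) = @_;

    # xvda is the root disk, so extra disks start at xvdb.
    my @ids = map { "xvd$_" } ('b' .. 'z');
    my @specs;

    # Sorted for a predictable order (the script itself uses each).
    foreach my $name (sort keys %{$disks}) {
        my $format = $disks->{$name}->{'format'} || 'raw';
        my $id     = shift(@ids);
        push(@specs, "'${path}/${inst}_${name}.${format},${format},${id},w'");
    }

    # The ISO takes the next free ID and always goes last.
    my $ci_id = shift(@ids);
    push(@specs, "'${iso},,${ci_id},cdrom'");
    return @specs;
}

my @specs = build_disk_specs('db1', '/srv/xen', { data => { size => 30 } }, '/tmp/db1_ci.iso');
print 'First boot:  ' . join(', ', @specs) . "\n";

# Because the ISO is always last, pop() removes it for later boots.
pop(@specs);
print 'Later boots: ' . join(', ', @specs) . "\n";
```

The first line of output lists the data disk on xvdb and the ISO on xvdc; the second lists only the data disk. That is why the config file is written twice: once to boot with cloud-init attached, and once more without it.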

Conclusion

I realize that this post was mostly a rewrite of material that I have shared before. At the same time, I felt that my previous script was really lacking without libvirt networking support. In a future post I would like to explore using libvirt with Xen. I'll admit, the scripts shared here probably have little practical use, as there are better tools out there for accomplishing the same thing. However, the main point of writing these was to learn more about libvirt and Perl. I am not a very good programmer, and the scripts I write mainly serve to help me improve my programming skills. In any case, I hope that someone can learn from this post and get some ideas. As always, thanks for reading!